Integrating Trustworthiness, Lifecycle Stages, and Stakeholder Roles: A Framework for Addressing AI’s Data-Driven Challenges and Ensuring Ethical Deployment with Actionable Insights for Risk Mitigation and Regulatory Compliance Across Sectors

Challenges in AI Governance and Their Significance

Artificial intelligence systems have advanced beyond prototypes to support essential functions in sectors such as finance, healthcare, and logistics. These systems differ from traditional software through their data-driven learning, continuous adaptation, and probabilistic outputs, introducing governance issues like model drift, bias, opacity, and privacy risks.

Incidents illustrate these challenges: Google’s Photos app mislabeled individuals due to biased training data; IBM’s Watson for Oncology provided unsafe recommendations from limited inputs; Microsoft’s Tay chatbot produced harmful content following manipulation. Such events undermine trust and highlight potential harm in critical applications.

Global responses include standards like the OECD AI Principles (2019), UNESCO’s Ethics Recommendation (2021), NIST’s AI Risk Management Framework (2023), the EU AI Act (2024), and NIST’s Generative AI Profile (2024), focusing on transparency, robustness, and accountability. The task for organizations is to implement these principles effectively.

Maikel Leon’s framework addresses AI governance as a sociotechnical issue, encompassing technology, organizational processes, and human factors to ensure responsible system behavior.

Methodological Approach and Framework Innovations

Leon employs a sociotechnical perspective, viewing AI trustworthiness as emerging from interactions between technical and social elements. This is complemented by normative ethics and risk management to define trustworthiness dimensions.

The methodology involves comparative synthesis of governance instruments, including the OECD Recommendation, UNESCO’s Ethics Recommendation, EU AI Act, NIST AI RMF 1.0, NIST Generative AI Profile, ISO/IEC 42001, ISO/IEC 23894, and IEEE’s Ethically Aligned Design, alongside peer-reviewed literature. Iterative coding identifies themes, leading to a unified model.

The framework includes:

- A taxonomy of trustworthiness attributes: robustness, reliability, explainability, fairness, privacy, transparency, and accountability, with associated techniques.

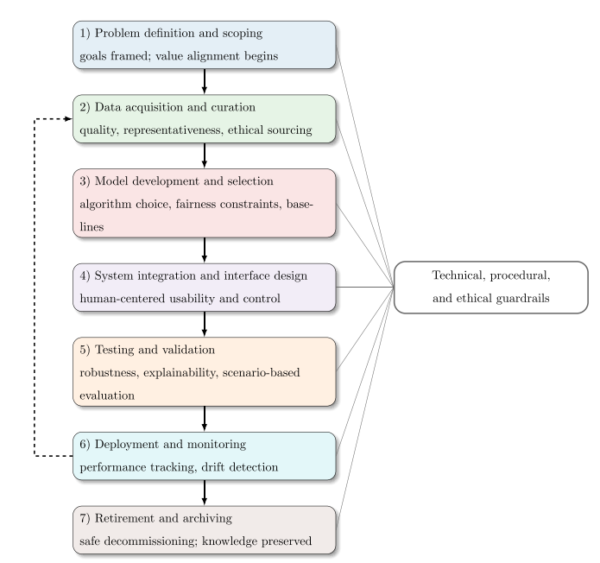

- A seven-stage AI lifecycle model with guardrails.

- A stakeholder responsibility matrix.

Key Findings and Conclusions

The research presents an integrated framework where trustworthiness emerges as a multidimensional property, achieved by combining key attributes, a structured lifecycle, and defined stakeholder roles. This approach highlights the need for continuous governance to manage AI’s dynamic nature.

A core finding is the taxonomy of trustworthiness attributes, each paired with practical techniques for implementation. For instance, robustness can be enhanced through adversarial training to handle input variations; reliability involves setting performance baselines and fallback options for consistent outputs; explainability uses tools like SHAP or LIME to provide understandable justifications; fairness requires bias detection and reweighting to ensure equitable treatment; privacy employs differential privacy or federated learning to protect data; transparency relies on datasheets and impact assessments for clear communication; and accountability is supported by audit trails and incident reporting. The framework notes that these attributes involve trade-offs, such as balancing explainability with model accuracy, which organizations can navigate by prioritizing based on system risk levels.
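To make the fairness technique above concrete, the following is a minimal sketch of one common reweighting scheme (often called reweighing, after Kamiran and Calders). It is not taken from Leon's paper, and the function names `reweighing_weights` and `weighted_positive_rate` and the toy data are illustrative assumptions. Each sample receives the weight P(group) * P(label) / P(group, label), so that group membership and outcome become statistically independent in the weighted training set.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Return one weight per sample: P(group) * P(label) / P(group, label).

    Hypothetical helper for illustration; `groups` holds a protected
    attribute value per sample and `labels` the observed outcome.
    """
    if len(groups) != len(labels):
        raise ValueError("groups and labels must have the same length")
    n = len(groups)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(groups, labels, weights, group):
    """Weighted share of positive labels (label == 1) within one group."""
    total = sum(w for g, w in zip(groups, weights) if g == group)
    positive = sum(
        w for g, y, w in zip(groups, labels, weights) if g == group and y == 1
    )
    return positive / total


# Toy data (assumed): group "a" receives positive outcomes more often than "b".
groups = ["a", "a", "a", "b", "b", "b", "b", "b"]
labels = [1, 1, 0, 1, 0, 0, 0, 0]
weights = reweighing_weights(groups, labels)

rate_a = weighted_positive_rate(groups, labels, weights, "a")
rate_b = weighted_positive_rate(groups, labels, weights, "b")
```

After weighting, both groups have the same weighted positive rate as the overall dataset, which is the property the scheme is designed to produce. The weights would typically be passed to a learner that accepts per-sample weights; whether this is the right intervention depends on the system's risk level and the fairness definition chosen.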

The seven-stage AI lifecycle model stands out as a practical innovation, designed for AI’s ongoing evolution in contrast to static software development cycles. It starts with problem definition, incorporating ethical impact assessments to align objectives with societal values. Data collection emphasizes consent and bias checks for quality inputs. Model development integrates interpretable designs and fairness constraints. Validation establishes benchmarks through stress testing. Deployment includes human-in-the-loop mechanisms for oversight. Monitoring tracks drift and triggers retraining via feedback loops. Retirement preserves knowledge through archiving and ethical reviews. In practice, the model lets engineers embed checks at each stage, such as automated pipelines for drift detection, which makes it adaptable to real-world deployments.
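As an illustration of the monitoring stage, the sketch below computes a Population Stability Index (PSI) between a baseline feature sample and a live sample and flags when retraining might be warranted. The paper does not prescribe a specific drift metric; PSI, the function names `psi` and `needs_retraining`, the bin count, and the 0.2 threshold (a commonly cited rule of thumb) are all assumptions made for this example.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a live sample.

    Bin edges are derived from the baseline range; live values outside
    that range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


def needs_retraining(expected, actual, threshold=0.2):
    """Return True when drift exceeds the (assumed) PSI threshold."""
    return psi(expected, actual) > threshold


# Toy data (assumed): the live sample is concentrated in the upper half
# of the baseline range, so it should be flagged as drifted.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

stable_flag = needs_retraining(baseline, baseline)
drift_flag = needs_retraining(baseline, shifted)
```

In a deployed pipeline a check like this would run on a schedule per feature or per model score, with the flag feeding the feedback loop that triggers review or retraining. The threshold should be calibrated to the system's risk level rather than taken as given.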

Stakeholder responsibilities are clarified in a matrix to avoid accountability gaps. Providers handle secure infrastructure; producers verify and document models; users interpret outputs and provide feedback; regulators enforce standards; auditors assess compliance; and affected communities contribute insights. This distribution supports collaborative governance.
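One way to make such a matrix operational is to encode it as data and check a project's role assignments against it. The sketch below is a hypothetical encoding, not the paper's own artifact: the dictionary mirrors the roles listed above, while `accountability_gaps` and the example assignments are assumptions for illustration.

```python
# Roles and duties as summarized in the text above.
RESPONSIBILITIES = {
    "provider": "secure infrastructure",
    "producer": "verify and document models",
    "user": "interpret outputs and provide feedback",
    "regulator": "enforce standards",
    "auditor": "assess compliance",
    "affected community": "contribute insights",
}


def accountability_gaps(assignments):
    """Return the roles in the matrix that have no named owner.

    `assignments` maps a role name to the team or person filling it;
    missing keys and empty values both count as gaps.
    """
    return sorted(role for role in RESPONSIBILITIES if not assignments.get(role))


# Hypothetical project in which only three roles have been assigned.
assignments = {
    "provider": "Cloud platform team",
    "producer": "ML engineering",
    "user": "Clinical operations",
}

gaps = accountability_gaps(assignments)
```

A check like this could run as part of a release review so that a system does not go live with unowned responsibilities.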

The paper’s historical timeline traces the field’s progression from principles to enforceable standards, and its regulatory comparison identifies themes common across frameworks, such as risk management and oversight, which helps organizations map their compliance obligations.

Case vignettes show the framework’s practical utility in revealing and mitigating risks. In healthcare, the framework is applied to a risk-stratification algorithm that was biased against Black patients because it used spending as a proxy for need; the recommended fixes include fairness constraints in model training and ongoing disparity monitoring. In finance, gender disparities in a credit algorithm prompt recommendations for protected-attribute checks and audit trails from inception. These examples illustrate how the framework guides interventions in high-risk domains.

In conclusion, the framework underscores that trustworthiness requires embedding ethics and controls throughout the lifecycle, reinforced by organizational behavior. As Leon states: “By aligning technical excellence with ethical imperatives and regulatory compliance, organizations can harness the transformative potential of AI while earning public trust.”

Implications and Future Directions

This framework offers organizations a structured approach for AI deployment in varied contexts. Future research should focus on unified metrics for trustworthiness, machine-checkable policies for cross-jurisdictional compliance, risks in generative and multimodal AI, composability of ethical constraints, and longitudinal studies on drift and human-AI collaboration.

Full reference for the original research: Leon, M. (2026). Lifecycle-Based Governance to Build Reliable Ethical AI Systems. Systems Research and Behavioral Science. https://doi.org/10.1002/sres.3014