Enhancing Photogrammetric Accuracy: ELISAC Improves Inlier Detection by Up to 57%, Reduces Computation Times, and Yields More Detailed DSMs from UAV Imagery in Forested Environments, Overcoming Spectral and Textural Similarities That Challenge Conventional Algorithms, Essential for Precise 3D Modeling Applications.

Outlier Challenges in UAV Image Matching: Issues and Significance

Matching corresponding points across overlapping images is a core process in photogrammetry and computer vision, crucial for applications such as 3D model generation, digital surface models (DSMs), image registration, triangulation, change detection, and mosaicking. However, this process frequently produces mismatched points, or outliers, caused by factors such as noise, rotations, scale variations, and differing baselines. Conventional algorithms, such as Random Sample Consensus (RANSAC), often yield suboptimal results, including fewer inliers, more false positives, excessive iterations, and longer processing times. These shortcomings arise from random sampling, noise, and errors in the initial assumptions.

The issue is particularly pronounced with UAV imagery, where the nonmetric cameras carried by most drones generate super-high-resolution images at centimetric scales, rendering traditional photogrammetry less effective. In forested regions, spectral and textural similarities among trees exacerbate outlier generation, leading to inaccurate sparse and dense point clouds and unreliable DSMs. Resolving this matters because a greater number of inliers strengthens triangulation and bundle block adjustment, resulting in denser, more uniformly distributed point clouds and more accurate DSMs. For professionals in environmental monitoring, urban planning, or forestry (for example, tree height estimation or change detection), these inaccuracies can undermine project reliability and efficiency.

Outlier rejection techniques encompass handcrafted methods like M-estimators or Hough transforms, alongside deep learning approaches. Nevertheless, deep learning frequently depends on RANSAC for final refinement, and research indicates that optimized RANSAC variants continue to surpass neural networks in handling viewpoint shifts, shadows, and repetitive patterns, and in generalization. Addressing these limitations is vital to maximize the utility of UAV data in deriving high-quality 3D information from various sensors.

ELISAC’s Methodological Enhancements to RANSAC

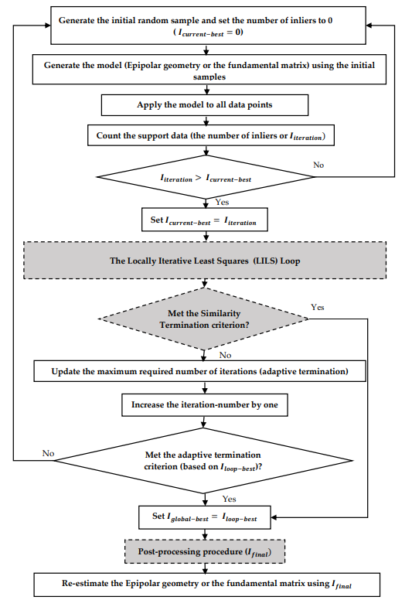

The research presents Empowered Locally Iterative Sample Consensus (ELISAC), an adaptation of the M-estimator Sample Consensus (MSAC), which refines hypothesis evaluation by assigning values to inliers and outliers. ELISAC integrates three adaptable enhancements to improve inlier numbers, stability, convergence, and output reliability.
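To make the difference between RANSAC and MSAC scoring concrete, here is a minimal sketch. It assumes the standard MSAC formulation, in which each inlier contributes its squared residual and each outlier contributes a constant penalty equal to the squared threshold. The function names and the residual values are illustrative and are not taken from the paper.

```python
import numpy as np

def ransac_score(residuals, threshold):
    """RANSAC-style score: the number of inliers (higher is better)."""
    return int(np.sum(np.abs(residuals) <= threshold))

def msac_cost(residuals, threshold):
    """MSAC-style cost: truncated quadratic loss (lower is better).

    Inliers add their squared residual; outliers add threshold**2.
    """
    r2 = np.square(residuals)
    return float(np.sum(np.minimum(r2, threshold ** 2)))

# Hypothetical residuals in pixels, for illustration only.
residuals = np.array([0.05, 0.10, 0.29, 0.50, 3.00])
n_inliers = ransac_score(residuals, threshold=0.3)
cost = msac_cost(residuals, threshold=0.3)
print(n_inliers, cost)
```

Because the MSAC cost rewards hypotheses whose inliers fit tightly, two hypotheses with the same inlier count can still be ranked against each other, which plain inlier counting cannot do.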

The Locally Iterative Least Squares (LILS) loops address RANSAC's failure to use newly identified inliers to refine the model during processing. The Basic LILS takes all inliers from an iteration, applies a least-squares adjustment to update the parameters via the collinearity equations, and reevaluates the model against all points, repeating until no further inliers are found. It is complemented by an Adaptive Termination criterion (AT-Basic) that dynamically adjusts the maximum number of iterations based on the best inlier set found so far. The Aggregated LILS extends this by combining the inliers from the current and previous loops through simple, unweighted aggregation, and improves convergence with an AT-Improved criterion.
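The local refinement loop described above can be sketched as follows. This is a simplified illustration and not the authors' implementation: a 2D line fit stands in for the collinearity-based adjustment, the `aggregate` flag is a hypothetical way to switch between the Basic and Aggregated behaviors, and `adaptive_max_iterations` uses the textbook RANSAC iteration bound, which may differ from the paper's AT-Basic and AT-Improved criteria.

```python
import math
import numpy as np

def fit_line(pts):
    """Least-squares fit of y = a*x + b (stand-in for the real adjustment)."""
    A = np.column_stack([pts[:, 0], np.ones(len(pts))])
    (a, b), *_ = np.linalg.lstsq(A, pts[:, 1], rcond=None)
    return a, b

def find_inliers(pts, model, threshold):
    """Boolean mask of points whose vertical residual is within threshold."""
    a, b = model
    return np.abs(pts[:, 1] - (a * pts[:, 0] + b)) <= threshold

def local_refine(pts, seed_mask, threshold, aggregate=False, max_loops=50):
    """Refit on the current inliers and re-test all points until no gain."""
    best = seed_mask.copy()
    for _ in range(max_loops):
        current = find_inliers(pts, fit_line(pts[best]), threshold)
        if aggregate:
            current = current | best  # unweighted union with the previous set
        if current.sum() <= best.sum():
            break  # no new inliers were found
        best = current
    return fit_line(pts[best]), best

def adaptive_max_iterations(n_inliers, n_points, sample_size, confidence=0.95):
    """Textbook bound: N = log(1 - p) / log(1 - w**s), with w the inlier ratio."""
    w = n_inliers / n_points
    if w >= 1.0:
        return 1
    log_fail = math.log1p(-(w ** sample_size))
    if w <= 0.0 or log_fail == 0.0:
        return math.inf
    return math.ceil(math.log(1.0 - confidence) / log_fail)

# Synthetic demo: ten points on y = 2x + 1 plus two gross outliers.
xs = np.arange(10, dtype=float)
pts = np.vstack([np.column_stack([xs, 2.0 * xs + 1.0]),
                 [[1.0, 50.0], [5.0, -40.0]]])
seed = np.zeros(len(pts), dtype=bool)
seed[:3] = True  # pretend the random sample hit three good points
model, mask = local_refine(pts, seed, threshold=0.1)
print(int(mask.sum()), adaptive_max_iterations(int(mask.sum()), len(pts), 2))
```

As the best inlier set grows, the iteration bound shrinks, which is the general mechanism by which an adaptive termination rule saves time.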

Additionally, the Similarity Termination (ST) criterion terminates processing when inlier overlap between successive iterations exceeds 95%, minimizing unnecessary computations without additional parameters and optimizing local and global searches. Finally, a post-processing procedure (PPP) applies a concluding LILS step to eliminate residual outliers, ensuring reliability with negligible added cost. The refined inliers then reestimate the model using collinearity equations or the fundamental matrix.
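A minimal sketch of a similarity-based stopping rule follows. The summary does not say how inlier overlap is measured, so this example assumes intersection over union of the inlier index sets. The function names and the sample index sets are hypothetical.

```python
def inlier_overlap(prev_inliers, curr_inliers):
    """Overlap of two inlier index sets, here assumed to be intersection over union."""
    prev_set, curr_set = set(prev_inliers), set(curr_inliers)
    union = prev_set | curr_set
    if not union:
        return 0.0
    return len(prev_set & curr_set) / len(union)

def should_stop(prev_inliers, curr_inliers, min_overlap=0.95):
    """Stop when successive inlier sets are more than 95% similar."""
    return inlier_overlap(prev_inliers, curr_inliers) > min_overlap

# Hypothetical inlier indices from successive iterations.
previous = range(100)
nearly_same = range(2, 100)    # 98 shared indices out of 100 in the union
quite_different = range(20, 100)
print(should_stop(previous, nearly_same), should_stop(previous, quite_different))
```

A rule of this kind needs no tuning beyond the similarity threshold itself, which matches the description of ST as requiring no additional parameters.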

Validation employed the Scale-Invariant Feature Transform (SIFT) for feature extraction, the collinearity equations for generating hypotheses, and the Sampson distance as the error measure (threshold of 0.3 pixels, 95% confidence). Two configurations were assessed: Basic LILS with AT-Basic, ST, and PPP; and Aggregated LILS with AT-Improved, ST, and PPP. The datasets included a computer vision benchmark of six image pairs involving rotation, scale, and viewpoint changes, and UAV forest images with varying overlaps and baselines. The metrics were the average number of inliers, their range, and their RMSE across 100 runs, plus computation time relative to MSAC. For DSM evaluation, the Aggregated LILS outputs were used to generate point clouds and DSMs, which were compared with Agisoft's results in CloudCompare.
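For readers unfamiliar with the Sampson distance, the sketch below computes it for a fundamental matrix using the standard first-order formula. The matrix `F`, the point coordinates, and the choice to compare the square root of the value against the 0.3-pixel threshold are assumptions made for illustration; the summary does not state whether the threshold applies to the squared or unsquared distance.

```python
import numpy as np

def sampson_sq(F, x1, x2):
    """Squared Sampson distance for homogeneous correspondences of shape (N, 3).

    d^2 = (x2^T F x1)^2 / ((F x1)_1^2 + (F x1)_2^2 + (F^T x2)_1^2 + (F^T x2)_2^2)
    """
    Fx1 = x1 @ F.T   # row i holds F @ x1[i]
    Ftx2 = x2 @ F    # row i holds F.T @ x2[i]
    num = np.sum(x2 * Fx1, axis=1) ** 2
    den = Fx1[:, 0] ** 2 + Fx1[:, 1] ** 2 + Ftx2[:, 0] ** 2 + Ftx2[:, 1] ** 2
    return num / den

# Illustrative F for a pure horizontal translation: matches must share a row (y1 == y2).
F = np.array([[0.0, 0.0, 0.0],
              [0.0, 0.0, -1.0],
              [0.0, 1.0, 0.0]])
x1 = np.array([[10.0, 5.0, 1.0], [20.0, 8.0, 1.0]])
x2 = np.array([[12.0, 5.2, 1.0], [25.0, 9.5, 1.0]])

d2 = sampson_sq(F, x1, x2)
is_inlier = np.sqrt(d2) <= 0.3  # assumption: threshold applied to the unsquared distance
print(d2, is_inlier)
```

In this toy geometry the first match is off by 0.2 pixels in the row direction and is accepted, while the second is off by 1.5 pixels and is rejected.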

Results and Conclusions

The experiments confirmed ELISAC's advantages over MSAC. On the computer vision dataset, Basic LILS found 1.12 to 1.57 times as many inliers as MSAC in 0.04 to 0.28 of MSAC's computation time. Aggregated LILS found 0.93 to 1.13 times as many inliers in 0.05 to 0.38 of the time. The RMSE values indicated improved stability. On the UAV data, both variants yielded at least 10% more inliers, and up to 1.3 times as many, with computation times generally below MSAC's except in one high-inlier instance. Sparse point clouds from ELISAC were denser (e.g., 4870 vs. 3306 points in one pair), and although MATLAB constraints reduced the number of dense cloud points, visual inspection showed better tree detection in forests. DSMs from ELISAC were more detailed, identifying trees overlooked by Agisoft, though smoother overall.

As the authors conclude, “the results demonstrated that the proposed methods could find more inliers with a faster computational time with respect to the MSAC algorithm.” They add that “our methods were able to detect and extract more single trees than Agisoft, which could be a direct result of finding more inliers.” ELISAC thus refines RANSAC by increasing inliers, stability, and speed while reducing outliers.

Implications and Future Potential

ELISAC’s effectiveness in spectrally uniform terrains suggests applicability in varied domains, including agriculture and disaster management, with potential for integration with deep learning.

We thank Bahram Salehi, Sina Jarahizadeh, and Amin Sarafraz for advancing photogrammetry. For discussions on ELISAC applications or collaborations, contact them at sjarahizadeh@esf.edu.

Reference: Salehi, B.; Jarahizadeh, S.; Sarafraz, A. An Improved RANSAC Outlier Rejection Method for UAV-Derived Point Cloud. Remote Sens. 2022, 14, 4917.